GStreamer: Difference between revisions

No edit summary |

m (Minor fixes) |

||

| (59 intermediate revisions by 3 users not shown) | |||

| Line 1: | Line 1: | ||

GStreamer is a toolkit for building audio- and video-processing pipelines. A pipeline might stream video from a file to a network, add an echo to a recording, or (most interesting to us) capture the output of a Video4Linux device. GStreamer is most often used to power graphical applications such as [https://wiki.gnome.org/Apps/Videos Totem], but can also be used directly from the command line. This page explains how GStreamer is better than the alternatives, and how to build an encoder using its command-line interface. |

|||

GStreamer is a multimedia processing library which front-end applications can use to provide a wide variety of functions such as audio and video playback, streaming, non-linear video editing and V4L2 capture support. It is also available for many other platforms, for example Windows. |

|||

It is mainly used as an API working in the background of applications such as Totem. But with the command-line tools gst-launch and entrans it is also possible to create and use GStreamer pipelines directly on the command line. |

|||

'''Before reading this page''', see [[V4L_capturing|V4L capturing]] to set your system up and create an initial recording. This page assumes you have already implemented the simple pipeline described there. |

|||

==Getting GStreamer== |

|||

GStreamer, the most common GStreamer plugins and the most important tools like ''gst-launch'' are available through your distribution's package management. But entrans and some of the plugins used in the examples below are not. You can find their sources bundled by the [http://sourceforge.net/projects/gentrans/files/gst-entrans/ GEntrans project] at SourceForge. Google may help you to find precompiled packages for your distro. |

|||

There are the old GStreamer 0.10 and the new GStreamer 1.0. They are incompatible but can be installed in parallel. Everything described below can be done with both GStreamer 0.10 and GStreamer 1.0. You simply have to use the appropriate commands, e.g. gst-launch-0.10 vs. gst-launch-1.0 (gst-launch is linked to one of them). Note that the ''ff(mpeg)'' plugins have been renamed to ''av''. For example, ''ffenc_mp2'' of GStreamer 0.10 is called ''avenc_mp2'' in GStreamer 1.0. |

|||

== Introduction to GStreamer == |

|||

== Entrans versus gst-launch== |

|||

gst-launch is better documented and part of all distributions. But entrans is a bit smarter for the following reasons: |

|||

* It provides partly automatically composing of GStreamer pipelines |

|||

* It allows cutting of the streams; the simplest application of this feature is to capture for a fixed time. That allows the muxers to properly close the captured files and write correct headers, which is not always the case if you finish capturing with gst-launch by simply typing Ctrl+C. To use this feature one has to insert a ''dam'' element after the first ''queue'' of each part of the pipeline. |

|||

No two use cases for encoding are quite alike. What's your preferred workflow? Is your processor fast enough to encode high quality video in real-time? Do you have enough disk space to store the raw video then process it after the fact? Do you want to play your video in DVD players, or is it enough that it works in your version of [http://www.videolan.org/vlc/index.en_GB.html VLC]? How will you work around your system's obscure quirks? |

|||

==Using GStreamer for V4L TV capture== |

|||

'''Use GStreamer if''' you want the best video quality possible with your hardware, and don't mind spending a weekend browsing the Internet for information. |

|||

===Why prefer GStreamer?=== |

|||

Besides being much more flexible than other solutions, GStreamer handles A/V synchronisation better. Most other tools, especially those based on the ffmpeg library (e.g. mencoder), have difficulties managing A/V synchronisation by design: they process the audio and the video stream independently, frame by frame as frames come in, then mux them relying on their specified framerates. E.g. if you have a 25fps video stream and a 48,000Hz audio stream, such a tool simply takes 1 video frame, 1,920 audio frames, 1 video frame and so on. This leads to sync issues for at least three reasons: |

|||

* If frames get dropped, audio and video shift against each other. For example, if your CPU is not fast enough and sometimes drops a video frame, after 25 dropped frames the video is one second ahead of the audio. (Using mencoder this can be fixed in most situations by using the ''-harddup'' option. It causes mencoder to watch for dropped video frames and to copy other frames to fill the gaps before muxing.) |

|||

* The audio and video devices need different amounts of time to start their streams. For example, after you have started capturing, the first audio frame is grabbed 0.01 seconds later while the first video frame is grabbed 0.7 seconds later. This leads to a constant time offset between audio and video. (Using mencoder this can be fixed by using the ''-delay'' option. But you have to find the appropriate delay by trial and error, and it won't be very precise. Another problem is that things very often change when you update your software, as for example the buffers and timeouts of the drivers and the audio framework change. So you have to do it again after each update.) |

|||

* If the clocks of your audio source and your video source are not accurate enough (very common on low-cost home-user products), so that your webcam sends 25.02 fps instead of 25 fps and your audio source delivers 47,999Hz instead of 48,000Hz, audio and video slowly drift apart over time. The result is that after an hour or so of capturing, audio and video differ by a second or so. But that's not only an issue of inaccurate clocks. Analogue TV is not completely analogue: the frames are discrete. Therefore video-capturing devices can't rely on their internal clocks but have to synchronise to the incoming signal. That's no problem when capturing live TV, but it is when you capture the signal of a VHS recorder. These recorders can't instantly change the speed of the tape but need to slightly adjust the fps for accurate tracking, especially if the quality of the tape is bad. (This issue can't be addressed using mencoder.) |

|||

To be more robust and accurate GStreamer attaches a timestamp to each incoming frame in the very beginning using the PC's clock. In the end muxing is done according to these timestamps. |

|||

Many container formats, for example Matroska, support timestamps. Therefore the GStreamer timestamps are written to the file when capturing to those formats. Others, like AVI, can't handle timestamps. If you write streams with a varying framerate (for example due to framedrops) to those files, they are played back with varying speed (but nevertheless correct sync) as the timestamps get lost. To prevent this you have to use the ''videorate'' plugin at the end of the pipeline's video part and the ''audiorate'' plugin at the end of the pipeline's audio part, which produce streams with constant framerates by copying and dropping frames according to the timestamps. |

|||

To further improve the A/V sync one should use the ''do-timestamp=true'' option for each capturing source. It gets the timestamps from the drivers instead of letting GStreamer do the stamping. This is even more accurate, as the effects of hardware buffering are also taken into account. Even if the source doesn't support this (e.g. many v4l2 drivers don't support timestamping) there is no harm in doing this, as GStreamer falls back to the normal behaviour in those cases. |

|||

'''Avoid GStreamer if''' you just want something quick-and-dirty, or can't stand programs with bad documentation and unhelpful error messages. |

|||

===Common capturing issues and their solutions=== |

|||

====Jerking==== |

|||

* If your hardware is not fast enough you will probably get framedrops. An indication is high CPU load. If there are only a few framedrops you can try to address the issue by enlarging the buffers. (If that doesn't help you have to change the codecs or their parameters and / or lower the resolution.) You have to enlarge the queues as well as the buffers of your sources, e.g. ''queue max-size-buffers=0 max-size-time=0 max-size-bytes=0'', ''v4l2src queue-size=16'', ''pulsesrc buffer-time=2000000''. Even if not necessary, increasing the buffer sizes doesn't do any harm. Big buffers only increase the pipeline's latency, which doesn't matter for capturing purposes. |

|||

* Some muxers need big chunks of data at once. Therefore situations happen in which the muxer waits, for example, for more audio data and doesn't process any video data. If this takes longer than filling up all the video buffers of the pipeline, video frames get dropped. To prevent the resulting jerking one should enlarge all the buffers. ''queue max-size-buffers=0 max-size-time=0 max-size-bytes=0'' should disable any size restrictions of the queue. But in fact there seems to be a hard-coded restriction in some cases. If needed you can work around this issue by adding additional ''queue'' elements to the pipeline. You can test whether too-small buffers are the reason for your jerking by changing the pipeline to write the audio and the video stream into different files instead of muxing them together. If these files don't judder, too-small buffers are your problem. |

|||

* Many v4l2 drivers don't support timestamping. So even if ''do-timestamp=true'' is given, GStreamer has to do the timestamping when it gets the frames from the driver. Most drivers tend to fill up their internal buffers and pass many frames as a cluster. This makes GStreamer give all the frames of such a cluster (nearly) the same timestamp. As those inaccuracies are typically very small, this doesn't disturb A/V sync, but it leads to jerking as the frames are played back in clusters if videorate isn't used. If videorate is used, jerking is even worse, as videorate relies on the inaccurate timestamps, drops all but one frame of each cluster and copies this frame to fill the gaps. If videorate is used with the ''silent=false'' option, it reports many framedrops and framecopies even if the CPU load is low. To solve this problem, use the ''stamp'' plugin between ''v4l2src'' and ''queue''. For example ''v4l2src do-timestamp=true ! stamp sync-margin=2 sync-interval=5 ! queue''. The stamp plugin inspects the buffers and uses a smart algorithm to correct the timestamps if the driver doesn't support timestamping. Using the sync options, ''stamp'' can additionally drop or copy frames to get a close-to-constant framerate. In most cases this doesn't completely replace videorate. It's safe to use videorate in addition. |

|||

=== Why is GStreamer better at encoding? === |

|||

====Capturing of disturbed video signals==== |

|||

* Most video capturing devices send EndOfStream signals if the quality of the input signal is too bad or if there is a period of snow. This aborts the capturing process. To prevent the device from sending EOS set ''num-buffers=-1'' on the ''v4l2src'' element. |

|||

* The ''stamp'' plugin gets confused by periods of snow and produces faulty timestamps and framedrops. This effect itself doesn't matter, as stamp recovers normal behaviour when the break is over. But chances are good that the buffers are full of old, wrongly stamped frames. ''stamp'' then drops only one of them each sync-interval, with the result that it can take quite a long time (minutes) until everything works fine again. To solve this problem set ''leaky=2'' on each ''queue'' element to allow dropping of old frames which aren't needed any longer. |

|||

* If using variable bitrate for encoding the bitrate increases very much during periods of bad signal quality or snow. Afterwards the codec uses a very low bitrate to reach the desired average bitrate resulting in poor quality. To prevent this and stay in the limits that are allowed for the purpose you aim at don't forget to specify a maximum and a minimum for the variable bitrate. |

|||

GStreamer isn't as easy to use as <code>mplayer</code>, and doesn't have as advanced editing functionality as <code>ffmpeg</code>. But it has superior support for synchronising audio and video in disturbed sources such as VHS tapes. If you specify your input is (say) 25 frames per second video and 48,000Hz audio, most tools will synchronise audio and video simply by writing 1 video frame, 1,920 audio frames, 1 video frame and so on. There are at least three ways this can cause errors: |

|||

===Sample commands=== |

|||

* '''initialisation timing''': audio and video desynchronised by a certain amount from the first frame, usually caused by audio and video devices taking different amounts of time to initialise. For example, the first audio frame might be delivered to GStreamer 0.01 seconds after it was requested, but the first video frame might not be delivered until 0.7 seconds after it was requested, causing all video to be 0.69 seconds behind the audio |

|||

====Record to ogg theora==== |

|||

** <code>mencoder</code>'s ''-delay'' option solves this by delaying the audio |

|||

* '''failure to encode''': frames that desynchronise gradually over time, usually caused by audio and video shifting relative to each other when frames are dropped. For example if your CPU is not fast enough and sometimes drops a video frame, after 25 dropped frames the video will be one second ahead of the audio |

|||

** <code>mencoder</code>'s ''-harddup'' option solves this by duplicating other frames to fill in the gaps |

|||

* '''source frame rate''': frames that aren't delivered at the advertised rate, usually caused by inaccurate clocks in the source hardware. For example, a low-cost webcam that advertises 25 FPS video and 48kHz audio might actually deliver 25.01 video frames and 47,999 audio frames per second, causing your audio and video to drift apart by a second or so per hour |

|||

** video tapes are especially problematic here - if you've ever seen a VCR struggle during those few seconds between two recordings on a tape, you've seen them adjusting the tape speed to accurately track the source. Frame counts can vary enough during these periods to instantly desynchronise audio and video |

|||

** <code>mencoder</code> has no solution for this problem |
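The arithmetic behind these examples can be checked with a short shell sketch. The 25 FPS, 48,000Hz and 25.01 FPS figures come from the text above; the variable names are only illustrative, and the drift calculation assumes the source runs at a steady 25.01 FPS for the whole hour:

```shell
# Nominal rates from the example above
VIDEO_FPS=25
AUDIO_RATE=48000

# Audio frames a naive muxer writes per video frame (48000 / 25)
AUDIO_FRAMES_PER_VIDEO_FRAME=$(( AUDIO_RATE / VIDEO_FPS ))
echo "$AUDIO_FRAMES_PER_VIDEO_FRAME audio frames per video frame"

# Extra video accumulated in one hour if the source really delivers 25.01 FPS
# (shell arithmetic is integer-only, so awk does the floating-point work)
VIDEO_DRIFT=$( awk 'BEGIN { printf "%.2f", (25.01 - 25) / 25 * 3600 }' )
echo "video drift after one hour: $VIDEO_DRIFT seconds"
```

A naive muxer has no way to notice this drift, because it only counts frames and never looks at when they actually arrived.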

|||

GStreamer solves these problems by attaching a timestamp to each incoming frame based on the time GStreamer receives the frame. It can then mux the sources back together accurately using these timestamps, either by using a format that supports variable framerates or by duplicating frames to fill in the blanks: |

|||

gst-launch-0.10 oggmux name=mux ! filesink location=test0.ogg v4l2src device=/dev/video2 ! \ |

|||

# If you choose a container format that supports timestamps (e.g. Matroska), timestamps are automatically written to the file and used to vary the playback speed |

|||

video/x-raw-yuv,width=640,height=480,framerate=\(fraction\)30000/1001 ! ffmpegcolorspace ! \ |

|||

# If you choose a container format that does not support timestamps (e.g. AVI), you must duplicate other frames to fill in the gaps by adding the <code>videorate</code> and <code>audiorate</code> plugins to the end of the relevant pipelines |

|||

theoraenc ! queue ! mux. alsasrc device=hw:2,0 ! audio/x-raw-int,channels=2,rate=32000,depth=16 ! \ |

|||

audioconvert ! vorbisenc ! mux. |

|||

=== Getting GStreamer === |

|||

The files will play in mplayer, using the codec Theora. Note the required workaround to get sound on a saa7134 card, which is set at 32000Hz (cf. [http://pecisk.blogspot.com/2006/04/alsa-worries-countinues.html bug]). However, I was still unable to get sound output, though mplayer claimed there was sound -- the video is good quality: |

|||

GStreamer, its most common plugins and tools are available through your distribution's package manager. Most Linux distributions include both the legacy ''0.10'' and modern ''1.0'' release series - each has bugs that stop it from working on some hardware, and this page focuses mostly on the modern ''1.0'' series. Converting from ''0.10'' to ''1.0'' is mostly just search-and-replace work (e.g. changing instances of <code>ff</code> to <code>av</code> because of the switch from <code>ffmpeg</code> to <code>libavcodec</code>). See [http://gstreamer.freedesktop.org/data/doc/gstreamer/head/manual/html/chapter-porting-1.0.html the porting guide] for more. |

|||

VIDEO: [theo] 640x480 24bpp 29.970 fps 0.0 kbps ( 0.0 kbyte/s) |

|||

Selected video codec: [theora] vfm: theora (Theora (free, reworked VP3)) |

|||

AUDIO: 32000 Hz, 2 ch, s16le, 112.0 kbit/10.94% (ratio: 14000->128000) |

|||

Selected audio codec: [ffvorbis] afm: ffmpeg (FFmpeg Vorbis decoder) |

|||

Other plugins are also available, such as <code>[http://gentrans.sourceforge.net/ GEntrans]</code> (used in some examples below). Google might help you find packages for your distribution, otherwise you'll need to download and compile them yourself. |

|||

====Record to mpeg4==== |

|||

=== Using GStreamer with gst-launch-1.0 === |

|||

Or mpeg4 with an avi container (Debian has disabled ffmpeg encoders, so install Marillat's package or use example above): |

|||

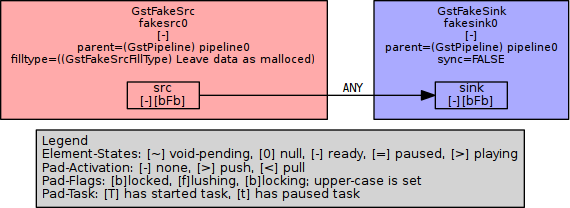

<code>gst-launch</code> is the standard command-line interface to GStreamer. Here's the simplest pipeline you can build: |

|||

gst-launch-0.10 avimux name=mux ! filesink location=test0.avi v4l2src device=/dev/video2 ! \ |

|||

video/x-raw-yuv,width=640,height=480,framerate=\(fraction\)30000/1001 ! ffmpegcolorspace ! \ |

|||

ffenc_mpeg4 ! queue ! mux. alsasrc device=hw:2,0 ! audio/x-raw-int,channels=2,rate=32000,depth=16 ! \ |

|||

audioconvert ! lame ! mux. |

|||

gst-launch-1.0 fakesrc ! fakesink |

|||

I get a file out of this that plays in mplayer, with blocky video and no sound. Avidemux cannot open the file. |

|||

This connects a single (fake) source to a single (fake) sink using the 1.0 series of GStreamer: |

|||

====Record to DVD-compliant MPEG2==== |

|||

[[File:GStreamer-simple-pipeline.png|center|Very simple pipeline]] |

|||

entrans -s cut-time -c 0-180 -v -x '.*caps' --dam -- --raw \ |

|||

v4l2src queue-size=16 do-timestamp=true device=/dev/video0 norm=PAL-BG num-buffers=-1 ! stamp silent=false progress=0 sync-margin=2 sync-interval=5 ! \ |

|||

queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! dam ! \ |

|||

cogcolorspace ! videorate silent=false ! \ |

|||

'video/x-raw-yuv,width=720,height=576,framerate=25/1,interlaced=true,aspect-ratio=4/3' ! \ |

|||

queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! \ |

|||

ffenc_mpeg2video rc-buffer-size=1500000 rc-max-rate=7000000 rc-min-rate=3500000 bitrate=4000000 max-key-interval=15 pass=pass1 ! \ |

|||

queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! mux. \ |

|||

pulsesrc buffer-time=2000000 do-timestamp=true ! \ |

|||

queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! dam ! \ |

|||

audioconvert ! audiorate silent=false ! \ |

|||

audio/x-raw-int,rate=48000,channels=2,depth=16 ! \ |

|||

queue silent=false max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! \ |

|||

ffenc_mp2 bitrate=192000 ! \ |

|||

queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! mux. \ |

|||

ffmux_mpeg name=mux ! filesink location=my_recording.mpg |

|||

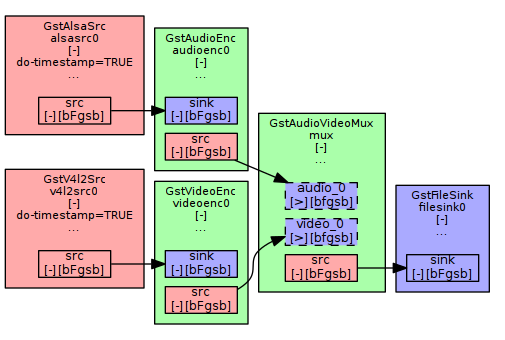

GStreamer can build all kinds of pipelines, but you probably want to build one that looks something like this: |

|||

This captures 3 minutes (180 seconds, see first line of the command) to ''my_recording.mpg'' and even works for bad input signals. |

|||

[[File:Example-pipeline.png|center|Idealised pipeline example]] |

|||

To get a list of elements that can go in a GStreamer pipeline, do: |

|||

gst-inspect-1.0 | less |

|||

Pass an element name to <code>gst-inspect-1.0</code> for detailed information. For example: |

|||

gst-inspect-1.0 fakesrc |

|||

gst-inspect-1.0 fakesink |

|||

The images above are based on graphs created by GStreamer itself. Install [http://www.graphviz.org Graphviz] to build graphs of your pipelines. For faster viewing of those graphs, you may install xdot from [http://www.semicomplete.com/projects/xdotool/]: |

|||

mkdir gst-visualisations |

|||

GST_DEBUG_DUMP_DOT_DIR=gst-visualisations gst-launch-1.0 fakesrc ! fakesink |

|||

xdot gst-visualisations/*-gst-launch.*_READY.dot |

|||

You may also compile those graphs to PNG, SVG or other supported formats: |

|||

dot -Tpng gst-visualisations/*-gst-launch.*_READY.dot > my-pipeline.png |

|||

To get graphs of the example pipelines below, prepend <code>GST_DEBUG_DUMP_DOT_DIR=gst-visualisations </code> to the <code>gst-launch-1.0</code> command. Run this command to generate a graph of GStreamer's most interesting stage: |

|||

xdot gst-visualisations/*-gst-launch.PLAYING_READY.dot |

|||

Remember to empty the <code>gst-visualisations</code> directory between runs. |

|||

=== Using GStreamer with entrans === |

|||

<code>gst-launch-1.0</code> is the main command-line interface to GStreamer, available by default. But <code>entrans</code> is a bit smarter: |

|||

* it provides partly-automated composition of GStreamer pipelines |

|||

* it allows you to cut streams, for example to capture for a predefined duration. That ensures headers are written correctly, which is not always the case if you close <code>gst-launch-1.0</code> by pressing Ctrl+C. To use this feature one has to insert a ''dam'' element after the first ''queue'' of each part of the pipeline |

|||

== Building pipelines == |

|||

You will probably need to build your own GStreamer pipeline for your particular use case. This section contains examples to give you the basic idea. |

|||

Note: for consistency and ease of copy/pasting, all filenames in this section are of the form <code>test-$( date --iso-8601=seconds )</code> - your shell should automatically convert this to e.g. <code>test-2010-11-12T13:14:15+1600.avi</code> |
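The pipelines in this section also rely on four shell variables. A minimal sketch of how they might be set is shown below. Every value here is an assumption for illustration only (device paths, resolution, framerate and sample rate all depend on your hardware), so substitute the settings you found while following [[V4L_capturing|V4L capturing]]:

```shell
# Assumed example values - replace them with the settings for your own hardware
VIDEO_DEVICE=/dev/video0
VIDEO_CAPABILITIES="video/x-raw,width=720,height=576,framerate=25/1"
AUDIO_DEVICE=hw:1,0
AUDIO_CAPABILITIES="audio/x-raw,rate=48000,channels=2"

# Build the timestamped filename once, so it can be printed or reused later
OUTPUT_FILE="test-$( date --iso-8601=seconds ).avi"
echo "Recording to $OUTPUT_FILE"
```

Setting the variables once at the top of your shell session lets you copy and paste the examples without editing each one.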

|||

=== Record raw video only === |

|||

A simple pipeline that initialises one video ''source'', sets the video format, ''muxes'' it into a file format, then saves it to a file: |

|||

gst-launch-1.0 \ |

|||

v4l2src device=$VIDEO_DEVICE \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! avimux \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).avi |

|||

This will create an AVI file with raw video and no audio. It should play in most software, but the file will be huge. |

|||

=== Record raw audio only === |

|||

A simple pipeline that initialises one audio ''source'', sets the audio format, ''muxes'' it into a file format, then saves it to a file: |

|||

gst-launch-1.0 \ |

|||

alsasrc device=$AUDIO_DEVICE \ |

|||

! $AUDIO_CAPABILITIES \ |

|||

! avimux \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).avi |

|||

This will create an AVI file with raw audio and no video. |

|||

=== Record video and audio === |

|||

gst-launch-1.0 \ |

|||

v4l2src device=$VIDEO_DEVICE \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! mux. \ |

|||

alsasrc device=$AUDIO_DEVICE \ |

|||

! $AUDIO_CAPABILITIES \ |

|||

! mux. \ |

|||

avimux name=mux \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).avi |

|||

Instead of a straightforward pipe with a single source leading into a muxer, this pipe has three parts: |

|||

# a video source leading to a named element (<code>! ''name''.</code> with a full stop means "pipe to the ''name'' element") |

|||

# an audio source leading to the same element |

|||

# a named muxer element leading to a file sink |

|||

Muxers combine data from many inputs into a single output, allowing you to build quite flexible pipes. |

|||

=== Create multiple sinks === |

|||

The <code>tee</code> element splits a single source into multiple outputs: |

|||

gst-launch-1.0 \ |

|||

v4l2src device=$VIDEO_DEVICE \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! avimux \ |

|||

! tee name=network \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).avi \ |

|||

network. ! tcpclientsink host=127.0.0.1 port=5678 |

|||

This sends your stream to a file (<code>filesink</code>) and out over the network (<code>tcpclientsink</code>). To make this work, you'll need another program listening on the specified port (e.g. <code>nc -l 127.0.0.1 -p 5678</code>). |

|||

=== Encode audio and video === |

|||

As well as piping streams around, GStreamer can manipulate their contents. The most common manipulation is to encode a stream: |

|||

gst-launch-1.0 \ |

|||

v4l2src device=$VIDEO_DEVICE \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! videoconvert \ |

|||

! theoraenc \ |

|||

! queue \ |

|||

! mux. \ |

|||

alsasrc device=$AUDIO_DEVICE \ |

|||

! $AUDIO_CAPABILITIES \ |

|||

! audioconvert \ |

|||

! vorbisenc \ |

|||

! mux. \ |

|||

oggmux name=mux \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).ogg |

|||

The <code>theoraenc</code> and <code>vorbisenc</code> elements encode the video and audio using [https://en.wikipedia.org/wiki/Theora Ogg Theora] and [https://en.wikipedia.org/wiki/Vorbis Ogg Vorbis] encoders. The pipes are then muxed together into an [https://en.wikipedia.org/wiki/Ogg Ogg] container before being saved. |

|||

=== Add buffers === |

|||

Different elements work at different speeds. For example, a CPU-intensive encoder might fall behind when another process uses too much processor time, or a duplicate frame detector might hold frames back while it examines them. This can cause streams to fall out of sync, or frames to be dropped altogether. You can add queues to smooth these problems out: |

|||

gst-launch-1.0 -q -e \ |

|||

v4l2src device=$VIDEO_DEVICE \ |

|||

! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! videoconvert \ |

|||

! x264enc interlaced=true pass=quant quantizer=0 speed-preset=ultrafast byte-stream=true \ |

|||

! progressreport update-freq=1 \ |

|||

! mux. \ |

|||

alsasrc device=$AUDIO_DEVICE \ |

|||

! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! $AUDIO_CAPABILITIES \ |

|||

! audioconvert \ |

|||

! flacenc \ |

|||

! mux. \ |

|||

matroskamux name=mux min-index-interval=1000000000 \ |

|||

! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).mkv |

|||

This creates a file using FLAC audio and x264 video in lossless mode, muxed into a Matroska container. Because we used <code>speed-preset=ultrafast</code>, the buffers should just smooth out the flow of frames through the pipelines. Even though the buffers are set to the maximum possible size, <code>speed-preset=veryslow</code> would eventually fill the video buffer and start dropping frames. |

|||

Some other things to note about this pipeline: |

|||

* [https://trac.ffmpeg.org/wiki/Encode/H.264 FFmpeg's H.264 page] includes a useful discussion of speed presets (both programs use the same underlying library) |

|||

* <code>quantizer=0</code> sets the video codec to lossless mode (~30GB/hour). Anything up to <code>quantizer=18</code> should not lose information visible to the human eye, and will produce much smaller files (~10GB/hour) |

|||

* <code>min-index-interval=1000000000</code> improves seek times by telling the Matroska muxer to create one ''cue data'' entry per second of playback. Cue data is a few kilobytes per hour, added to the end of the file when encoding completes. If you try to watch your Matroska video while it's being recorded, it will take a long time to skip forward/back because the cue data hasn't been written yet |
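The numbers in these notes are easy to adapt with shell arithmetic. The sketch below assumes a hypothetical three-hour recording, and uses the rough 30GB/hour and 10GB/hour figures from the list above - real file sizes depend heavily on your source material:

```shell
# min-index-interval is given in nanoseconds: one cue entry per second
CUE_INTERVAL_SECONDS=1
MIN_INDEX_INTERVAL=$(( CUE_INTERVAL_SECONDS * 1000000000 ))
echo "matroskamux name=mux min-index-interval=$MIN_INDEX_INTERVAL"

# Rough disk space estimates, using the approximate per-hour figures above
RECORDING_HOURS=3   # assumed recording length
LOSSLESS_GB=$(( RECORDING_HOURS * 30 ))
QUANTIZER_18_GB=$(( RECORDING_HOURS * 10 ))
echo "quantizer=0:  about ${LOSSLESS_GB}GB"
echo "quantizer=18: about ${QUANTIZER_18_GB}GB"
```

Checking the estimate against your free disk space before you start is cheaper than discovering a full disk halfway through a tape.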

|||

== Common capturing issues and their solutions == |

|||

=== Reducing Jerkiness === |

|||

If motion that should appear smooth instead stops and starts, try the following: |

|||

'''Check for muxer issues'''. Some muxers need big chunks of data, which can cause one stream to pause while it waits for the other to fill up. Change your pipeline to pipe your audio and video directly to their own <code>filesink</code>s - if the separate files don't judder, the muxer is the problem. |

|||

* If the muxer is at fault, add ''! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0'' immediately before each stream goes to the muxer |

|||

** queues have hard-coded maximum sizes - you can chain queues together if you need more buffering than one queue can hold |

|||

'''Check your CPU load'''. When GStreamer uses 100% CPU, it may need to drop frames to keep up. |

|||

* If frames are dropped occasionally when CPU usage spikes to 100%, add a (larger) buffer to help smooth things out. |

|||

** this can be a source's internal buffer (e.g. ''alsasrc buffer-time=2000000''), or it can be an extra buffering step in your pipeline (''! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0'') |

|||

* If frames are dropped when other processes have high CPU load, consider using [https://en.wikipedia.org/wiki/Nice_(Unix) nice] to make sure encoding gets CPU priority |

|||

* If frames are dropped regularly, use a different codec, change the parameters, lower the resolution, or otherwise choose a less resource-intensive solution |

|||

As a general rule, you should try increasing buffers first - if it doesn't work, it will just increase the pipeline's latency a bit. Be careful with <code>nice</code>, as it can slow down or even halt your computer. |

|||

'''Check for incorrect timestamps'''. If your video driver works by filling up an internal buffer then passing a cluster of frames without timestamps, GStreamer will think these should all have (nearly) the same timestamp. Make sure you have a <code>videorate</code> element in your pipeline, then add ''silent=false'' to it. If it reports many framedrops and framecopies even when the CPU load is low, the driver is probably at fault. |

|||

* <code>videorate</code> on its own will actually make this problem worse by picking one frame and replacing all the others with it. Instead install <code>entrans</code> and add its ''stamp'' element between ''v4l2src'' and ''queue'' (e.g. ''v4l2src do-timestamp=true ! stamp sync-margin=2 sync-interval=5 ! videorate ! queue'') |

|||

** ''stamp'' intelligently guesses timestamps if drivers don't support timestamping. Its ''sync-'' options drop or copy frames to get a nearly-constant framerate. Using <code>videorate</code> as well does no harm and can solve some remaining problems |

|||

=== Avoiding pitfalls with video noise === |

|||

If your video contains periods of [https://en.wikipedia.org/wiki/Noise_(video) video noise] (snow), you may need to deal with some extra issues: |

|||

* Most devices send an EndOfStream signal if the input signal quality drops too low, causing GStreamer to finish capturing. To prevent the device from sending EOS, set ''num-buffers=-1'' on the ''v4l2src'' element. |

|||

* The ''stamp'' plugin gets confused by periods of snow, causing it to generate faulty timestamps and framedropping. ''stamp'' will recover normal behaviour when the break is over, but will probably leave the buffer full of weirdly-stamped frames. ''stamp'' only drops one weirdly-stamped frame each sync-interval, so it can take several minutes until everything works fine again. To solve this problem, set ''leaky=2'' on each ''queue'' element to allow dropping old frames |

|||

* Periods of noise (snow, bad signal etc.) are hard to encode. Variable bitrate encoders will often drive up the bitrate during the noise then down afterwards to maintain the average bitrate. To minimise the issues, specify a minimum and maximum bitrate in your encoder |

|||

* Snow at the start of a recording is just plain ugly. To get black input instead from a VCR, use the remote control to change the input source before you start recording |

|||

=== Investigating bugs in GStreamer === |

|||

GStreamer comes with an extensive tracing system that lets you figure out what went wrong, although you often need to understand GStreamer's internals to read the traces. Read this [https://gstreamer.freedesktop.org/data/doc/gstreamer/head/gstreamer/html/gst-running.html documentation page] for the basics of how the tracing system works. When something goes wrong you should: |

|||

# check for a useful error message by enabling the WARNING debug level (which also shows errors), <code>GST_DEBUG=2 gst-launch-1.0</code> |

|||

# try similar pipelines: reduce yours to its most minimal form, then add elements back until you can reproduce the issue |

|||

# as the problem most likely lies in a V4L2 element, you can enable full V4L2 traces using <code>GST_DEBUG="v4l2*:7,2" gst-launch-1.0</code> |

|||

# find an error message that looks relevant, search the Internet for information about it |

|||

# try more variations based on what you learnt, until you eventually find something that works |

|||

# ask on Freenode #gstreamer or through [mailto:gstreamer-devel@lists.freedesktop.org GStreamer Mailing List] |

|||

# if you think you found a bug, you should report it through [https://bugzilla.gnome.org/enter_bug.cgi?product=GStreamer Gnome Bugzilla] |

|||

== Sample pipelines == |

|||

=== Record from a bad analog signal to MJPEG video and RAW mono audio === |

|||

gst-launch-1.0 \ |

|||

v4l2src device=$VIDEO_DEVICE do-timestamp=true \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! videorate \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! videoconvert \ |

|||

! $VIDEO_CAPABILITIES \ |

|||

! jpegenc \ |

|||

! queue \ |

|||

! mux. \ |

|||

alsasrc device=$AUDIO_DEVICE \ |

|||

! $AUDIO_CAPABILITIES \ |

|||

! audiorate \ |

|||

! audioresample \ |

|||

! $AUDIO_CAPABILITIES, rate=44100 \ |

|||

! audioconvert \ |

|||

! $AUDIO_CAPABILITIES, rate=44100, channels=1 \ |

|||

! queue \ |

|||

! mux. \ |

|||

avimux name=mux ! filesink location=test-$( date --iso-8601=seconds ).avi |

|||

The chip that captures audio and video might not deliver the exact framerates specified, which the AVI format can't handle. The <code>audiorate</code> and <code>videorate</code> elements remove or duplicate frames to maintain a constant rate. |

|||

=== View pictures from a webcam (GStreamer 0.10) === |

|||

gst-launch-0.10 \ |

|||

v4l2src do-timestamp=true device=$VIDEO_DEVICE \ |

|||

! video/x-raw-yuv,format=\(fourcc\)UYVY,width=320,height=240 \ |

|||

! ffmpegcolorspace \ |

|||

! autovideosink |

|||

In GStreamer 0.10, ''videoconvert'' was called ''ffmpegcolorspace''. |

|||

=== Entrans: Record to DVD-compliant MPEG2 (GStreamer 0.10) === |

|||

entrans -s cut-time -c 0-180 -v -x '.*caps' --dam -- --raw \ |

|||

v4l2src queue-size=16 do-timestamp=true device=$VIDEO_DEVICE norm=PAL-BG num-buffers=-1 \ |

|||

! stamp silent=false progress=0 sync-margin=2 sync-interval=5 \ |

|||

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! dam \ |

|||

! cogcolorspace \ |

|||

! videorate silent=false \ |

|||

! 'video/x-raw-yuv,width=720,height=576,framerate=25/1,interlaced=true,aspect-ratio=4/3' \ |

|||

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! ffenc_mpeg2video rc-buffer-size=1500000 rc-max-rate=7000000 rc-min-rate=3500000 bitrate=4000000 max-key-interval=15 pass=pass1 \ |

|||

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! mux. \ |

|||

pulsesrc buffer-time=2000000 do-timestamp=true \ |

|||

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! dam \ |

|||

! audioconvert \ |

|||

! audiorate silent=false \ |

|||

! audio/x-raw-int,rate=48000,channels=2,depth=16 \ |

|||

! queue silent=false max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! ffenc_mp2 bitrate=192000 \ |

|||

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \ |

|||

! mux. \ |

|||

ffmux_mpeg name=mux \ |

|||

! filesink location=test-$( date --iso-8601=seconds ).mpg |

|||

This captures 3 minutes (180 seconds, see first line of the command) to ''test-$( date --iso-8601=seconds ).mpg'' and even works for bad input signals. |

|||

* I wasn't able to figure out how to produce a mpeg with ac3-sound as neither ffmux_mpeg nor mpegpsmux support ac3 streams at the moment. mplex does but I wasn't able to get it working as one needs very big buffers to prevent the pipeline from stalling and at least my GStreamer build didn't allow for such big buffers. |


||

* The limited buffer size on my system is again the reason why I had to add a third queue element to the middle of the audio as well as of the video part of the pipeline to prevent jerking. |


||

| Line 85: | Line 307: | ||

* It seems to be important that the ''video/x-raw-yuv,width=720,height=576,framerate=25/1,interlaced=true,aspect-ratio=4/3'' caps statement comes after ''videorate'', as videorate otherwise seems to drop the aspect-ratio metadata, resulting in files with aspect-ratio 1 in their headers. Those files will probably be played back warped, and programs like dvdauthor complain. |


||


=== Bash script to record video tapes with entrans === |

||

For most use cases, you'll want to wrap GStreamer in a larger shell script. This script protects against several common mistakes during encoding. |

|||

If you don't care for sound, this simple version works for uncompressed video: |

|||

See also [[V4L_capturing/script|the V4L capturing script]] for a wrapper that represents a whole workflow. |

|||

gst-launch-0.10 v4l2src device=/dev/video5 ! video/x-raw-yuv,width=640,height=480 ! avimux ! \ |

|||

filesink location=test0.avi |

|||

tcprobe says this video-only file uses the I420 codec and gives the framerate as correct NTSC: |

|||

$ tcprobe -i test1.avi |

|||

[tcprobe] RIFF data, AVI video |

|||

[avilib] V: 29.970 fps, codec=I420, frames=315, width=640, height=480 |

|||

[tcprobe] summary for test1.avi, (*) = not default, 0 = not detected |

|||

import frame size: -g 640x480 [720x576] (*) |

|||

frame rate: -f 29.970 [25.000] frc=4 (*) |

|||

no audio track: use "null" import module for audio |

|||

length: 315 frames, frame_time=33 msec, duration=0:00:10.510 |

|||

The files will play in mplayer, using the codec [raw] RAW Uncompressed Video. |

|||

===Bash script to digitize video tapes easily=== |

|||

<nowiki>#!/bin/bash |

<nowiki>#!/bin/bash |

||

| Line 197: | Line 404: | ||

nice -n -10 entrans -s cut-time -c 0-$laenge -m --dam -- --raw \ |

nice -n -10 entrans -s cut-time -c 0-$laenge -m --dam -- --raw \ |

||

v4l2src queue-size=16 do-timestamp=true device= |

v4l2src queue-size=16 do-timestamp=true device=$VIDEO_DEVICE norm=PAL-BG num-buffers=-1 ! stamp sync-margin=2 sync-interval=5 silent=false progress=0 ! \ |

||

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! dam ! \ |

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! dam ! \ |

||

cogcolorspace ! videorate ! \ |

cogcolorspace ! videorate ! \ |

||

| Line 216: | Line 423: | ||

rm ~/.lock_shutdown.digitalisieren</nowiki> |

rm ~/.lock_shutdown.digitalisieren</nowiki> |

||

The script uses a command line similar to [[GStreamer#Record_to_DVD-compliant_MPEG2|this]] to produce a DVD compliant MPEG2 file. |


||

* The script |

* The script aborts if another instance is already running. |

||

* If not it asks for the length of the tape and its description |


||

* It records to ''description.mpg'' or if this file already exists to ''description.0.mpg'' and so on for the given time plus 10 minutes. The target-directory has to be specified in the beginning of the script. |


||

* As setting of the inputs and settings of the capture device is only partly possible via GStreamer other tools are used. |


||

* Adjust the settings to match your input sources, the recording volume, capturing saturation and so on. |


||

====Record from a bad analog signal to MJPEG video and RAW audio==== |

|||

All of the advice above still applies. However, the stamp element no longer exists in GStreamer 1.0. |

|||

cogcolorspace and ffmpegcolorspace have been replaced by videoconvert. |

|||

gst-launch-1.0 -v avimux name=mux v4l2src device=/dev/video1 do-timestamp=true ! 'video/x-raw,format=(string)YV12,width=(int)720,height=(int)576' ! \ |

|||

videorate ! 'video/x-raw,format=(string)YV12,framerate=25/1' ! videoconvert ! 'video/x-raw,format=(string)YV12,width=(int)720,height=(int)576' ! \ |

|||

jpegenc ! queue ! mux. alsasrc device=hw:2,0 ! 'audio/x-raw,format=(string)S16LE,rate=(int)48000,channels=(int)2' ! \ |

|||

audiorate ! audioresample ! 'audio/x-raw,rate=(int)44100' ! audioconvert ! 'audio/x-raw,channels=(int)1' ! queue ! mux. mux. ! filesink location=test.avi |

|||

This pipeline gets video from the V4L2 device "/dev/video1" (compressing it to MJPEG) and sound from the ALSA device "hw:2,0", and records both to an AVI file. |

|||

Adapt the input formats to your chip, and apply any transformations after videorate/audiorate. |

|||

As stated above, it is best to use both audiorate and videorate: you probably use the same chip to capture audio and video, so the audio is subject to disturbance as well. |

|||

==Using GStreamer to view pictures from a Webcam== |

|||

gst-launch-0.10 v4l2src use-fixed-fps=false ! video/x-raw-yuv,format=\(fourcc\)UYVY,width=320,height=240 \ |

|||

! ffmpegcolorspace ! ximagesink |

|||

gst-launch-0.10 v4lsrc autoprobe-fps=false device=/dev/video0 ! "video/x-raw-yuv, width=160, height=120, \ |

|||

framerate=10, format=(fourcc)I420" ! xvimagesink |

|||

==Converting formats== |

|||

To convert the files to matlab (didn't work for me): |

|||

mencoder test0.avi -ovc raw -vf format=bgr24 -o test0m.avi -ffourcc none |

|||

For details, see gst-launch and google; the plugins in particular are poorly documented so far. |

|||

==Further documentation resources== |


||

* [[V4L_capturing|V4L Capturing]] |

|||

* [http://gstreamer.freedesktop.org/ Gstreamer project] |


||

* [http://gstreamer.freedesktop.org/data/doc/gstreamer/head/faq/html/ FAQ] |


||

Latest revision as of 14:01, 11 March 2018

GStreamer is a toolkit for building audio- and video-processing pipelines. A pipeline might stream video from a file to a network, or add an echo to a recording, or (most interesting to us) capture the output of a Video4Linux device. GStreamer is most often used to power graphical applications such as Totem, but can also be used directly from the command line. This page will explain how GStreamer is better than the alternatives, and how to build an encoder using its command-line interface.

Before reading this page, see V4L capturing to set your system up and create an initial recording. This page assumes you have already implemented the simple pipeline described there.

Introduction to GStreamer

No two use cases for encoding are quite alike. What's your preferred workflow? Is your processor fast enough to encode high quality video in real-time? Do you have enough disk space to store the raw video then process it after the fact? Do you want to play your video in DVD players, or is it enough that it works in your version of VLC? How will you work around your system's obscure quirks?

Use GStreamer if you want the best video quality possible with your hardware, and don't mind spending a weekend browsing the Internet for information.

Avoid GStreamer if you just want something quick-and-dirty, or can't stand programs with bad documentation and unhelpful error messages.

Why is GStreamer better at encoding?

GStreamer isn't as easy to use as mplayer, and doesn't have as advanced editing functionality as ffmpeg. But it has superior support for synchronising audio and video in disturbed sources such as VHS tapes. If you specify your input is (say) 25 frames per second video and 48,000Hz audio, most tools will synchronise audio and video simply by writing 1 video frame, 1,920 audio frames, 1 video frame and so on. There are at least three ways this can cause errors:

- initialisation timing: audio and video desynchronised by a certain amount from the first frame, usually caused by audio and video devices taking different amounts of time to initialise. For example, the first audio frame might be delivered to GStreamer 0.01 seconds after it was requested, but the first video frame might not be delivered until 0.7 seconds after it was requested, causing all video to be roughly 0.7 seconds behind the audio

mencoder's -delay option solves this by delaying the audio

- failure to encode: frames that desynchronise gradually over time, usually caused by audio and video shifting relative to each other when frames are dropped. For example if your CPU is not fast enough and sometimes drops a video frame, after 25 dropped frames the video will be one second ahead of the audio

mencoder's -harddup option solves this by duplicating other frames to fill in the gaps

- source frame rate: frames that aren't delivered at the advertised rate, usually caused by inaccurate clocks in the source hardware. For example, a low-cost webcam that advertises 25 FPS video and 48kHz audio might actually deliver 25.01 video frames and 47,999 audio frames per second, causing your audio and video to drift apart by a second or so per hour

- video tapes are especially problematic here - if you've ever seen a VCR struggle during those few seconds between two recordings on a tape, you've seen them adjusting the tape speed to accurately track the source. Frame counts can vary enough during these periods to instantly desynchronise audio and video

mencoder has no solution for this problem
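The source frame rate example can be checked with a little arithmetic. This is only a sketch: `drift_seconds` is a hypothetical helper (not part of GStreamer or mencoder), and it assumes frames are written back-to-back at the nominal rate while the device really delivers the actual rate.

```shell
#!/bin/sh
# drift_seconds NOMINAL_RATE ACTUAL_RATE DURATION_SECONDS
# Hypothetical helper: prints how many seconds of playback a stream gains
# (positive) or loses (negative) after DURATION_SECONDS of capture.
drift_seconds() {
    LC_ALL=C awk -v nominal="$1" -v actual="$2" -v duration="$3" \
        'BEGIN { printf "%.3f\n", (actual - nominal) * duration / nominal }'
}

# Figures from the webcam example above, over one hour (3600 seconds)
echo "video drift: $(drift_seconds 25 25.01 3600) s"
echo "audio drift: $(drift_seconds 48000 47999 3600) s"
```

The two streams drift in opposite directions here, so the gap between them is the difference of the two results.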

GStreamer solves these problems by attaching a timestamp to each incoming frame based on the time GStreamer receives the frame. It can then mux the sources back together accurately using these timestamps, either by using a format that supports variable framerates or by duplicating frames to fill in the blanks:

- If you choose a container format that supports timestamps (e.g. Matroska), timestamps are automatically written to the file and used to vary the playback speed

- If you choose a container format that does not support timestamps (e.g. AVI), you must duplicate other frames to fill in the gaps by adding the videorate and audiorate plugins to the end of the relevant pipelines
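A wrapper script could encode this rule so the rate elements are not forgotten. The sketch below is an assumption-laden illustration: `needs_rate_elements` is a hypothetical helper, and it only knows the two containers named above (AVI and Matroska), reporting everything else as unknown.

```shell
#!/bin/sh
# needs_rate_elements FILENAME
# Hypothetical helper. Exit status:
#   0 - container has no timestamps (AVI): add videorate/audiorate
#   1 - container stores timestamps (Matroska): rate elements not required
#   2 - container not covered by this sketch
needs_rate_elements() {
    case "${1##*.}" in
        avi) return 0 ;;
        mkv) return 1 ;;
        *)   return 2 ;;
    esac
}

if needs_rate_elements "test.avi"; then
    echo "test.avi: add videorate and audiorate before the muxer"
fi
```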

Getting GStreamer

GStreamer, its most common plugins and tools are available through your distribution's package manager. Most Linux distributions include both the legacy 0.10 and modern 1.0 release series. Each has bugs that stop it from working on some hardware, and this page focuses mostly on the modern 1.0 series. Converting from 0.10 to 1.0 is mostly just search-and-replace work (e.g. changing instances of ff to av because of the switch from ffmpeg to libavcodec, so ffenc_mp2 becomes avenc_mp2). See the porting guide for more.

Other plugins are also available, such as GEntrans (used in some examples below). Google might help you find packages for your distribution, otherwise you'll need to download and compile them yourself.

Using GStreamer with gst-launch-1.0

gst-launch is the standard command-line interface to GStreamer. Here's the simplest pipeline you can build:

gst-launch-1.0 fakesrc ! fakesink

This connects a single (fake) source to a single (fake) sink using the 1.0 series of GStreamer:

GStreamer can build all kinds of pipelines, but you probably want to build one that looks something like this:

To get a list of elements that can go in a GStreamer pipeline, do:

gst-inspect-1.0 | less

Pass an element name to gst-inspect-1.0 for detailed information. For example:

gst-inspect-1.0 fakesrc

gst-inspect-1.0 fakesink

The images above are based on graphs created by GStreamer itself. Install Graphviz to build graphs of your pipelines. For faster viewing of those graphs, you can also install xdot:

mkdir gst-visualisations

GST_DEBUG_DUMP_DOT_DIR=gst-visualisations gst-launch-1.0 fakesrc ! fakesink

xdot gst-visualisations/*-gst-launch.*_READY.dot

You can also compile those graphs to PNG, SVG or other supported formats:

dot -Tpng gst-visualisations/*-gst-launch.*_READY.dot > my-pipeline.png

To get graphs of the example pipelines below, prepend GST_DEBUG_DUMP_DOT_DIR=gst-visualisations to the gst-launch-1.0 command. Run this command to generate a graph of GStreamer's most interesting stage:

xdot gst-visualisations/*-gst-launch.PLAYING_READY.dot

Remember to empty the gst-visualisations directory between runs.
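Emptying the directory by hand is easy to forget, so a small helper can do it before each run. This is a minimal sketch: `prepare_dot_dir` is a hypothetical function name, and it deliberately removes only `.dot` files so anything else kept in the directory survives.

```shell
#!/bin/sh
# prepare_dot_dir DIR
# Hypothetical helper: create DIR if needed and delete .dot files left
# over from earlier runs, leaving any other files untouched.
prepare_dot_dir() {
    dir="$1"
    mkdir -p "$dir" || return 1
    rm -f "$dir"/*.dot
}
```

A typical use would be `prepare_dot_dir gst-visualisations` followed by the `GST_DEBUG_DUMP_DOT_DIR=gst-visualisations gst-launch-1.0 ...` command shown above.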

Using GStreamer with entrans

gst-launch-1.0 is the main command-line interface to GStreamer, available by default. But entrans is a bit smarter:

- it provides partly-automated composition of GStreamer pipelines

- it allows you to cut streams, for example to capture for a predefined duration. That ensures headers are written correctly, which is not always the case if you close gst-launch-1.0 by pressing Ctrl+C. To use this feature, insert a dam element after the first queue of each part of the pipeline

Building pipelines

You will probably need to build your own GStreamer pipeline for your particular use case. This section contains examples to give you the basic idea.

Note: for consistency and ease of copy/pasting, all filenames in this section are of the form test-$( date --iso-8601=seconds ) - your shell should automatically convert this to e.g. test-2010-11-12T13:14:15+1600.avi
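In a script it can be handy to build the filename once and reuse it. The sketch below assumes a hypothetical `capture_filename` helper; `date --iso-8601=seconds` is a GNU extension, so the function falls back to a more widely supported format string when that option is unavailable.

```shell
#!/bin/sh
# capture_filename EXTENSION
# Hypothetical helper: prints test-<timestamp>.<EXTENSION>, matching the
# naming used by the examples in this section.
capture_filename() {
    ext="$1"
    # Try the GNU form first, then fall back to an explicit format string
    stamp=$(date --iso-8601=seconds 2>/dev/null) || stamp=$(date +%Y-%m-%dT%H:%M:%S%z)
    printf 'test-%s.%s\n' "$stamp" "$ext"
}

echo "Would record to: $(capture_filename avi)"
```

Note that the timestamp contains colons, which some filesystems (for example those used on removable media) may not accept.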

Record raw video only

A simple pipeline that initialises one video source, sets the video format, muxes it into a file format, then saves it to a file:

gst-launch-1.0 \

v4l2src device=$VIDEO_DEVICE \

! $VIDEO_CAPABILITIES \

! avimux \

! filesink location=test-$( date --iso-8601=seconds ).avi

This will create an AVI file with raw video and no audio. It should play in most software, but the file will be huge.

Record raw audio only

A simple pipeline that initialises one audio source, sets the audio format, muxes it into a file format, then saves it to a file:

gst-launch-1.0 \

alsasrc device=$AUDIO_DEVICE \

! $AUDIO_CAPABILITIES \

! avimux \

! filesink location=test-$( date --iso-8601=seconds ).avi

This will create an AVI file with raw audio and no video.

Record video and audio

gst-launch-1.0 \

v4l2src device=$VIDEO_DEVICE \

! $VIDEO_CAPABILITIES \

! mux. \

alsasrc device=$AUDIO_DEVICE \

! $AUDIO_CAPABILITIES \

! mux. \

avimux name=mux \

! filesink location=test-$( date --iso-8601=seconds ).avi

Instead of a straightforward pipe with a single source leading into a muxer, this pipe has three parts:

- a video source leading to a named element (! name. with a full stop means "pipe to the name element")

- an audio source leading to the same element

- a named muxer element leading to a file sink

Muxers combine data from many inputs into a single output, allowing you to build quite flexible pipes.
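Because multi-part pipelines are easy to mistype, it can help to print the fully expanded command before running it. The following is a dry-run sketch only: `print_av_pipeline` is a hypothetical helper, and the default device names and capabilities are placeholder assumptions that you should replace with the values from your own setup.

```shell
#!/bin/sh
# Placeholder defaults (assumptions); override them in your environment.
VIDEO_DEVICE=${VIDEO_DEVICE:-/dev/video0}
AUDIO_DEVICE=${AUDIO_DEVICE:-hw:0}
VIDEO_CAPABILITIES=${VIDEO_CAPABILITIES:-video/x-raw,width=720,height=576}
AUDIO_CAPABILITIES=${AUDIO_CAPABILITIES:-audio/x-raw,rate=48000,channels=2}

# print_av_pipeline OUTPUT_FILE
# Hypothetical helper: prints (does not run) the three-part pipeline:
# video branch, audio branch, then the named muxer and file sink.
print_av_pipeline() {
    cat <<EOF
gst-launch-1.0 \\
  v4l2src device=$VIDEO_DEVICE \\
  ! $VIDEO_CAPABILITIES \\
  ! mux. \\
  alsasrc device=$AUDIO_DEVICE \\
  ! $AUDIO_CAPABILITIES \\
  ! mux. \\
  avimux name=mux \\
  ! filesink location=$1
EOF
}

print_av_pipeline test.avi
```

Printing the command first makes it easy to confirm that every `! mux.` has a matching `name=mux` element before any recording starts.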

Create multiple sinks

The tee element splits a single source into multiple outputs:

gst-launch-1.0 \

v4l2src device=$VIDEO_DEVICE \

! $VIDEO_CAPABILITIES \

! avimux \

! tee name=network \

! filesink location=test-$( date --iso-8601=seconds ).avi \

network. ! tcpclientsink host=127.0.0.1 port=5678

This sends your stream to a file (filesink) and out over the network (tcpclientsink). To make this work, you'll need another program listening on the specified port (e.g. nc -l 127.0.0.1 -p 5678).

Encode audio and video

As well as piping streams around, GStreamer can manipulate their contents. The most common manipulation is to encode a stream:

gst-launch-1.0 \

v4l2src device=$VIDEO_DEVICE \

! $VIDEO_CAPABILITIES \

! videoconvert \

! theoraenc \

! queue \

! mux. \

alsasrc device=$AUDIO_DEVICE \

! $AUDIO_CAPABILITIES \

! audioconvert \

! vorbisenc \

! mux. \

oggmux name=mux \

! filesink location=test-$( date --iso-8601=seconds ).ogg

The theoraenc and vorbisenc elements encode the video and audio using Ogg Theora and Ogg Vorbis encoders. The pipes are then muxed together into an Ogg container before being saved.

Add buffers

Different elements work at different speeds. For example, a CPU-intensive encoder might fall behind when another process uses too much processor time, or a duplicate frame detector might hold frames back while it examines them. This can cause streams to fall out of sync, or frames to be dropped altogether. You can add queues to smooth these problems out:

gst-launch-1.0 -q -e \

v4l2src device=$VIDEO_DEVICE \

! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! $VIDEO_CAPABILITIES \

! videoconvert \

! x264enc interlaced=true pass=quant quantizer=0 speed-preset=ultrafast byte-stream=true \

! progressreport update-freq=1 \

! mux. \

alsasrc device=$AUDIO_DEVICE \

! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! $AUDIO_CAPABILITIES \

! audioconvert \

! flacenc \

! mux. \

matroskamux name=mux min-index-interval=1000000000 \

! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! filesink location=test-$( date --iso-8601=seconds ).mkv

This creates a file using FLAC audio and x264 video in lossless mode, muxed into a Matroska container. Because we used speed-preset=ultrafast, the buffers should just smooth out the flow of frames through the pipelines. Even though the buffers are set to the maximum possible size, speed-preset=veryslow would eventually fill the video buffer and start dropping frames.

Some other things to note about this pipeline:

- FFmpeg's H.264 page includes a useful discussion of speed presets (both programs use the same underlying library)

- quantizer=0 sets the video codec to lossless mode (~30GB/hour). Anything up to quantizer=18 should not lose information visible to the human eye, and will produce much smaller files (~10GB/hour)

- min-index-interval=1000000000 improves seek times by telling the Matroska muxer to create one cue data entry per second of playback. Cue data is a few kilobytes per hour, added to the end of the file when encoding completes. If you try to watch your Matroska video while it's being recorded, it will take a long time to skip forward/back because the cue data hasn't been written yet
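The rough per-hour figures above can be turned into a disk space check before a long capture. This is a sketch under stated assumptions: `estimate_gb` and `free_gb` are hypothetical helpers, and the 30 and 10 GB/hour rates are only the approximate figures quoted above, not measurements of your own setup.

```shell
#!/bin/sh
# estimate_gb MINUTES GB_PER_HOUR
# Hypothetical helper: whole gigabytes needed, rounded up.
estimate_gb() {
    echo $(( ($1 * $2 + 59) / 60 ))
}

# free_gb DIRECTORY
# Hypothetical helper: whole gigabytes available on DIRECTORY's filesystem.
# df -P guarantees one output line per filesystem; column 4 is the free
# space in kilobytes because of -k.
free_gb() {
    df -Pk "$1" | awk 'NR==2 { print int($4 / 1048576) }'
}

need=$(estimate_gb 90 30)
have=$(free_gb .)
echo "A 90 minute lossless capture needs about ${need}GB; ${have}GB free here"
```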

Common capturing issues and their solutions

Reducing Jerkiness

If motion that should appear smooth instead stops and starts, try the following:

Check for muxer issues. Some muxers need big chunks of data, which can cause one stream to pause while it waits for the other to fill up. Change your pipeline to pipe your audio and video directly to their own filesinks - if the separate files don't judder, the muxer is the problem.

- If the muxer is at fault, add ! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 immediately before each stream goes to the muxer

- queues have hard-coded maximum sizes - you can chain queues together if you need more buffering than one buffer can hold

Check your CPU load. When GStreamer uses 100% CPU, it may need to drop frames to keep up.

- If frames are dropped occasionally when CPU usage spikes to 100%, add a (larger) buffer to help smooth things out.

- this can be a source's internal buffer (e.g. alsasrc buffer-time=2000000), or it can be an extra buffering step in your pipeline (! queue max-size-buffers=0 max-size-time=0 max-size-bytes=0)

- If frames are dropped when other processes have high CPU load, consider using nice to make sure encoding gets CPU priority

- If frames are dropped regularly, use a different codec, change the parameters, lower the resolution, or otherwise choose a less resource-intensive solution

As a general rule, you should try increasing buffers first - if it doesn't work, it will just increase the pipeline's latency a bit. Be careful with nice, as it can slow down or even halt your computer.

Check for incorrect timestamps. If your video driver works by filling up an internal buffer then passing a cluster of frames without timestamps, GStreamer will think these should all have (nearly) the same timestamp. Make sure you have a videorate element in your pipeline, then add silent=false to it. If it reports many framedrops and framecopies even when the CPU load is low, the driver is probably at fault.

- videorate on its own will actually make this problem worse by picking one frame and replacing all the others with it. Instead install entrans and add its stamp element between v4l2src and queue (e.g. v4l2src do-timestamp=true ! stamp sync-margin=2 sync-interval=5 ! videorate ! queue)

- stamp intelligently guesses timestamps if drivers don't support timestamping. Its sync- options drop or copy frames to get a nearly-constant framerate. Using videorate as well does no harm and can solve some remaining problems

Avoiding pitfalls with video noise

If your video contains periods of video noise (snow), you may need to deal with some extra issues:

- Most devices send an EndOfStream signal if the input signal quality drops too low, causing GStreamer to finish capturing. To prevent the device from sending EOS, set num-buffers=-1 on the v4l2src element.

- The stamp plugin gets confused by periods of snow, causing it to generate faulty timestamps and framedropping. stamp will recover normal behaviour when the break is over, but will probably leave the buffer full of weirdly-stamped frames. stamp only drops one weirdly-stamped frame each sync-interval, so it can take several minutes until everything works fine again. To solve this problem, set leaky=2 on each queue element to allow dropping old frames

- Periods of noise (snow, bad signal etc.) are hard to encode. Variable bitrate encoders will often drive up the bitrate during the noise then down afterwards to maintain the average bitrate. To minimise the issues, specify a minimum and maximum bitrate in your encoder

- Snow at the start of a recording is just plain ugly. To get black input instead from a VCR, use the remote control to change the input source before you start recording
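Since the advice above is to set leaky=2 on every queue, a script can generate the queue description in one place so no queue is missed. This is a minimal sketch: `queue_element` is a hypothetical helper, and the size limits are simply the ones used by the examples on this page.

```shell
#!/bin/sh
# queue_element [leaky]
# Hypothetical helper: prints a queue description for use in a pipeline.
# leaky=2 is the numeric form of leaky=downstream, which drops old
# buffers when the queue is full.
queue_element() {
    limits="max-size-buffers=0 max-size-time=0 max-size-bytes=0"
    if [ "${1:-}" = "leaky" ]; then
        echo "queue leaky=2 $limits"
    else
        echo "queue $limits"
    fi
}

echo "! $(queue_element leaky) \\"
```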

Investigating bugs in GStreamer

GStreamer comes with an extensive tracing system that lets you figure out what went wrong, although you often need to understand GStreamer's internals to read the traces. Read this documentation page for the basics of how the tracing system works. When something goes wrong you should:

- check for a useful error message by enabling the WARNING debug level (which also shows errors): GST_DEBUG=2 gst-launch-1.0

- try similar pipelines: reduce yours to its most minimal form, then add elements back until you can reproduce the issue

- as the problem most likely lies in a V4L2 element, you can enable full V4L2 traces using GST_DEBUG="v4l2*:7,2" gst-launch-1.0

- find an error message that looks relevant, and search the Internet for information about it

- try more variations based on what you learnt, until you eventually find something that works

- ask on Freenode #gstreamer or through GStreamer Mailing List

- if you think you found a bug, you should report it through Gnome Bugzilla
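Full V4L2 traces are long, so it usually helps to send them to a file rather than the terminal. The sketch below assumes a hypothetical wrapper named `run_with_debug`; it relies on the GST_DEBUG, GST_DEBUG_FILE and GST_DEBUG_NO_COLOR environment variables, and the log path is whatever you choose.

```shell
#!/bin/sh
# run_with_debug DEBUG_SPEC LOGFILE COMMAND [ARGS...]
# Hypothetical wrapper: runs COMMAND with GStreamer debug output written
# to LOGFILE (without colour codes) instead of the terminal.
run_with_debug() {
    spec="$1"
    log="$2"
    shift 2
    GST_DEBUG="$spec" GST_DEBUG_NO_COLOR=1 GST_DEBUG_FILE="$log" "$@"
}

# Example (commented out so that nothing is captured by accident):
# run_with_debug 'v4l2*:7,2' v4l2-trace.log gst-launch-1.0 fakesrc ! fakesink
```

The resulting log file is also convenient to attach when asking for help or reporting a bug.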

Sample pipelines

Record from a bad analog signal to MJPEG video and RAW mono audio

gst-launch-1.0 \

v4l2src device=$VIDEO_DEVICE do-timestamp=true \

! $VIDEO_CAPABILITIES \

! videorate \

! $VIDEO_CAPABILITIES \

! videoconvert \

! $VIDEO_CAPABILITIES \

! jpegenc \

! queue \

! mux. \

alsasrc device=$AUDIO_DEVICE \

! $AUDIO_CAPABILITIES \

! audiorate \

! audioresample \

! $AUDIO_CAPABILITIES, rate=44100 \

! audioconvert \

! $AUDIO_CAPABILITIES, rate=44100, channels=1 \

! queue \

! mux. \

avimux name=mux ! filesink location=test-$( date --iso-8601=seconds ).avi

The chip that captures audio and video might not deliver the exact framerates specified, which the AVI format can't handle. The audiorate and videorate elements remove or duplicate frames to maintain a constant rate.

View pictures from a webcam (GStreamer 0.10)

gst-launch-0.10 \

v4l2src do-timestamp=true device=$VIDEO_DEVICE \

! video/x-raw-yuv,format=\(fourcc\)UYVY,width=320,height=240 \

! ffmpegcolorspace \

! autovideosink

In GStreamer 0.10, videoconvert was called ffmpegcolorspace.

Entrans: Record to DVD-compliant MPEG2 (GStreamer 0.10)

entrans -s cut-time -c 0-180 -v -x '.*caps' --dam -- --raw \

v4l2src queue-size=16 do-timestamp=true device=$VIDEO_DEVICE norm=PAL-BG num-buffers=-1 \

! stamp silent=false progress=0 sync-margin=2 sync-interval=5 \

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! dam \

! cogcolorspace \

! videorate silent=false \

! 'video/x-raw-yuv,width=720,height=576,framerate=25/1,interlaced=true,aspect-ratio=4/3' \

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! ffenc_mpeg2video rc-buffer-size=1500000 rc-max-rate=7000000 rc-min-rate=3500000 bitrate=4000000 max-key-interval=15 pass=pass1 \

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! mux. \

pulsesrc buffer-time=2000000 do-timestamp=true \

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! dam \

! audioconvert \

! audiorate silent=false \

! audio/x-raw-int,rate=48000,channels=2,depth=16 \

! queue silent=false max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! ffenc_mp2 bitrate=192000 \

! queue silent=false leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 \

! mux. \

ffmux_mpeg name=mux \

! filesink location=test-$( date --iso-8601=seconds ).mpg

This captures 3 minutes (180 seconds, see first line of the command) to test-$( date --iso-8601=seconds ).mpg and even works for bad input signals.

- I wasn't able to figure out how to produce a mpeg with ac3-sound as neither ffmux_mpeg nor mpegpsmux support ac3 streams at the moment. mplex does but I wasn't able to get it working as one needs very big buffers to prevent the pipeline from stalling and at least my GStreamer build didn't allow for such big buffers.

- The limited buffer size on my system is again the reason why I had to add a third queue element to the middle of the audio as well as of the video part of the pipeline to prevent jerking.

- Many HOWTOs use ffmpegcolorspace instead of cogcolorspace. That works too, but cogcolorspace is much faster.

- It seems to be important that the caps filter video/x-raw-yuv,width=720,height=576,framerate=25/1,interlaced=true,aspect-ratio=4/3 comes after videorate. Otherwise videorate seems to drop the aspect-ratio metadata, resulting in files with an aspect ratio of 1 in their headers. Such files will probably be played back distorted, and programs like dvdauthor complain.
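The pipeline above uses GStreamer 0.10 element names. Since the ff-prefixed elements are called av in GStreamer 1.0 (ffenc_mp2 becomes avenc_mp2), the renaming can be sketched as a text substitution. This is only a rough sketch: ff_to_av is a hypothetical helper, it renames element names only, and caps such as video/x-raw-yuv, element properties and other elements are left untouched and may need manual changes. Whether each renamed element exists in your installation should be checked with gst-inspect.

```shell
#!/bin/bash
# Hypothetical helper: rewrite GStreamer 0.10 "ff" element names to the
# "av" names of GStreamer 1.0. Only the element prefix is changed; caps
# and properties are NOT converted.
ff_to_av() {
    printf '%s\n' "$1" |
        sed -E 's/(^|[^[:alnum:]_])ff(enc|dec|mux|demux)_/\1av\2_/g'
}

ff_to_av 'ffenc_mp2 bitrate=192000 ! mux. ffmux_mpeg name=mux'
# prints: avenc_mp2 bitrate=192000 ! mux. avmux_mpeg name=mux
```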

Bash script to record video tapes with entrans

For most use cases, you'll want to wrap GStreamer in a larger shell script. This script protects against several common mistakes during encoding.

See also the V4L capturing script for a wrapper that represents a whole workflow.

#!/bin/bash

targetdirectory="$HOME/videos" # a quoted ~ is not expanded, so use $HOME

# Check whether another instance is already running

if [[ -e "$HOME/.lock_shutdown.digitalisieren" ]]; then

echo ""

echo ""

echo "Capturing already running. It is impossible to capture two tapes simultaneously. Hit a key to abort."

read -n 1

exit

fi

# trap keyboard interrupt (control-c)

trap control_c 0 SIGHUP SIGINT SIGQUIT SIGABRT SIGALRM SIGSEGV SIGTERM # SIGKILL cannot be trapped

control_c()

# run if user hits control-c

{

cleanup

exit $?

}

cleanup()

{

rm -f ~/.lock_shutdown.digitalisieren # -f: no error if the lock was already removed

return $?

}

touch "$HOME/.lock_shutdown.digitalisieren"

echo ""

echo ""

echo "Please enter the length of the tape in minutes and press ENTER. (Press Ctrl+C to abort.)"

echo ""

while read -e laenge; do

if [[ $laenge =~ ^[0-9]+$ ]]; then

break

else

echo ""

echo ""

echo "That's not a number."

echo "Please enter the length of the tape in minutes and press ENTER. (Press Ctrl+C to abort.)"

echo ""

fi

done

let laenge=laenge+10 # safety margin in case the tape is longer than stated

let laenge=laenge*60

echo ""

echo ""

echo "Please type in the description of the tape."

echo "Don't forget to rewind the tape!"

echo "Hit ENTER to start capturing. Press Ctrl+C to abort."

echo ""

read -e name;

name=${name//\//_}

name=${name//\"/_}

name=${name//:/_}

# If the name is already taken, append a number

if [[ -e "$targetdirectory/$name.mpg" ]]; then

nummer=0

while [[ -e "$targetdirectory/$name.$nummer.mpg" ]]; do

let nummer=nummer+1

done

name=$name.$nummer

fi

# Audio settings: unmute and set levels

amixer -D pulse cset name='Capture Switch' 1 >& /dev/null # enable the capture channel

amixer -D pulse cset name='Capture Volume' 20724 >& /dev/null # set the capture level

# Select the video input and configure the card

v4l2-ctl --set-input 3 >& /dev/null

v4l2-ctl -c saturation=80 >& /dev/null

v4l2-ctl -c brightness=130 >& /dev/null

let ende=$(date +%s)+laenge

echo ""

echo "Working"

echo "Capturing will be finished at "$(date -d @$ende +%H.%M)"."

echo ""

echo "Press Ctrl+C to finish capturing now."

nice -n -10 entrans -s cut-time -c 0-$laenge -m --dam -- --raw \

v4l2src queue-size=16 do-timestamp=true device=$VIDEO_DEVICE norm=PAL-BG num-buffers=-1 ! stamp sync-margin=2 sync-interval=5 silent=false progress=0 ! \

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! dam ! \

cogcolorspace ! videorate ! \

'video/x-raw-yuv,width=720,height=576,framerate=25/1,interlaced=true,aspect-ratio=4/3' ! \

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! \

ffenc_mpeg2video rc-buffer-size=1500000 rc-max-rate=7000000 rc-min-rate=3500000 bitrate=4000000 max-key-interval=15 pass=pass1 ! \

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! mux. \

pulsesrc buffer-time=2000000 do-timestamp=true ! \

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! dam ! \

audioconvert ! audiorate ! \

audio/x-raw-int,rate=48000,channels=2,depth=16 ! \

queue max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! \

ffenc_mp2 bitrate=192000 ! \

queue leaky=2 max-size-buffers=0 max-size-time=0 max-size-bytes=0 ! mux. \

ffmux_mpeg name=mux ! filesink location=\"$targetdirectory/$name.mpg\" >& /dev/null

echo "Finished Capturing"

rm ~/.lock_shutdown.digitalisieren

The script uses a command line similar to the one in the previous section to produce a DVD-compliant MPEG2 file.

- The script aborts if another instance is already running.

- If not, it asks for the length of the tape and its description.

- It records to description.mpg or, if that file already exists, to description.0.mpg and so on, for the given time plus 10 minutes. The target directory has to be specified at the beginning of the script.

- Because selecting inputs and configuring the capture device is only partly possible via GStreamer, other tools (amixer and v4l2-ctl) are used for that.

- Adjust these settings to match your input source, recording volume, capture saturation and so on.
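The bookkeeping steps listed above can be isolated into small functions, which makes them easier to test without a capture card. The following is a minimal sketch, not the script's actual structure: all function names are hypothetical, and acquire_lock uses bash's noclobber option as one possible way to check for and create the lock file in a single step.

```shell
#!/bin/bash
# Hypothetical helpers; all function names are illustrative only.

# Succeed only if the argument consists entirely of digits.
is_minutes() {
    [[ $1 =~ ^[0-9]+$ ]]
}

# Tape length in minutes -> capture time in seconds, including the
# 10-minute safety margin. 10# forces base 10 so "08" is not read as octal.
capture_seconds() {
    echo $(( (10#$1 + 10) * 60 ))
}

# Replace slashes, double quotes and colons with underscores.
sanitize_name() {
    local name=$1
    name=${name//\//_}
    name=${name//\"/_}
    name=${name//:/_}
    printf '%s\n' "$name"
}

# Print the first free base name in DIR: NAME, then NAME.0, NAME.1, ...
unique_name() {
    local dir=$1 name=$2 n=0
    if [[ -e "$dir/$name.mpg" ]]; then
        while [[ -e "$dir/$name.$n.mpg" ]]; do
            n=$(( n + 1 ))
        done
        name=$name.$n
    fi
    printf '%s\n' "$name"
}

# Create the lock file given as $1, failing if it already exists.
# With noclobber set, the redirection refuses to overwrite a file.
acquire_lock() {
    ( set -o noclobber; echo "$$" > "$1" ) 2>/dev/null
}

capture_seconds 90            # prints 6000
sanitize_name 'Tape 1: a/b'   # prints Tape 1_ a_b
```

In a wrapper, acquire_lock "$HOME/.lock_shutdown.digitalisieren" would replace the separate existence test and touch, and the result of unique_name would be used to build the filesink location.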

Further documentation resources

- V4L Capturing

- GStreamer project

- FAQ

- Documentation

- man gst-launch

- entrans command line tool documentation

- gst-inspect plugin-name